Tales of a SysAdmin

Having worked in IT for over 20 years, I have accumulated tales of woe that I thought I would share for your enjoyment: a "This is Spinal Tap" of IT. The names of guilty parties have been omitted to avoid embarrassment, although a number of the companies I have worked for no longer exist. And no, it was not my fault!

NT4 Roll-out

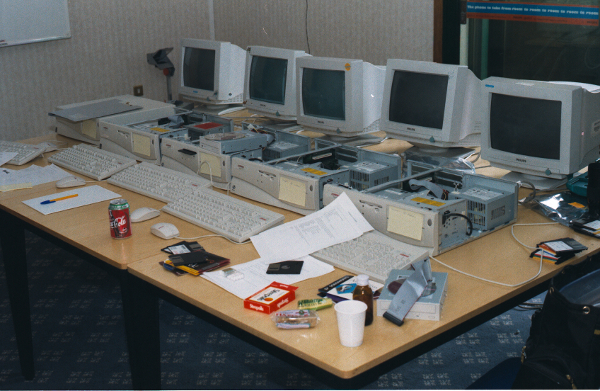

We are somewhat spoiled with PXE / UEFI network booting these days. How about this instead? Late 90s, working in an office near Heathrow Airport, rolling out Windows NT 4.0 to the desktops. Set up in a spare office on the 3rd floor, going from machine to machine with CD-ROMs and 3.5-inch floppy discs. Throat infection was optional!

The view from the 3rd floor was impressive: straight over to LHR, where you could watch aircraft landing and taking off. Concorde was always an experience. Impressively loud, with nothing but car alarms screaming the length and breadth of the airport after every take-off. The reheat was extra crackly on rainy days!

A note to young people who might be marvelling at this old way of doing things: no, these images were not taken on a smartphone, as smartphones had not been invented yet. They were taken with a film camera.

Duplex

This tale takes us back to the late 90s, when 100 Mb/s Ethernet was fast and new. Working for an IT outsourcing company, I had been dropped into a new building in Cambridge that now housed three soon-to-be-sold-off bits of Philips. The "core" of the network was two 3Com managed switches. Plugged into those was a Cisco router, and into that, the BT fibre-optic kit that supplied the super-fast (for the time) 256 kb/s tie-line.

Every now and then, the link to the outside world would fall over for a few minutes before recovering. Random faults are always a nightmare to track down. I was the lowly Desktop Support Analyst and it was above my pay grade. We had network gurus who were paid the big bucks to sort that out. Not my place to touch it!

One weekend, with no warning to us in desktop support, the networking gurus arrived on site with BT in tow. BT replaced their kit, and our networking gurus replaced the Cisco router. All fixed... Monday rolls around, and the connection flips out. Coming from an Electronic Engineering background, I have somewhat better fault-finding skills. I looked up the specs for the Cisco router and found its 10 Mb/s port could only handle half-duplex (ask an old person!). It was attached to a 3Com switch that was trying to detect what speed it should run at, its ports being capable of 100 Mb/s full-duplex. When the link became too busy, packets collided and the 3Com switch would flip the port whilst it tried to work out what speed it should be running at.

So the lowly Desktop Support Analyst set the 3Com switch port to manual and locked it at 10 Mb/s half-duplex. Problem solved! The network gurus were suitably unimpressed and offered no thanks for a job well done!

Y2K

Pundits like to claim that nothing happened with the year 2000 date change. That is thanks to teams of IT people who worked tirelessly in the months running up to December 31st, running around with boxes of 3.5 inch floppy discs patching the hell out of anything that resembled IT kit. Not everything was rosy, mind!

On the same site as above, the IT Manager receives a telephone call from the old York Street site. In order to avoid any disasters, they are going to power off the AS/400 mainframe over the holiday. The IT Manager asks if I am "planning to power anything off on our site?" "Nope!" is the reply. I come from an electronics background, and I have an idea of what is coming...

January 2000* rolls around and the world has not ended. That comes when Unix time runs out in 2038! As expected, the site I am looking after has had zero issues, and we are busy wading through several days of emails and eagerly awaiting the new series of Friends. The IT Manager's phone rings: it's York Street. The AS/400 will be dead for several days. They threw the power breakers to ON, and the cold, aged capacitors in the power supplies did what I had expected: they promptly exploded, blowing their contents all over the insides of the mainframe. IBM techs would be there when they could. Lots of people had done the same, and predictably, their power supplies had also let go.

The IT Manager, who had been promoted from data-entry clerk as there was no-one else left in IT, learned why Electronic Engineers leave things running, especially when they have been powered up and running for extended periods!

* Side note: I am on call for the date-change period, just in case. So I am at home watching the pretty fireworks on CNN (via cable TV) when they pause for an exclusive. They have managed to get hold of the captain of a large US Navy vessel out in the Pacific. The ship is over the International Date Line, so via satellite phone, the presenter eagerly asks if they have noticed anything going wrong. "Well ma'am," says the captain, "in proud Naval tradition, all clocks onboard ships at sea are synchronised to Greenwich Mean Time." I laughed so hard I fell off the sofa. A lesson in why you should always do your research!
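The "Unix time runs out" quip refers to the Year 2038 problem: systems that still store time as a signed 32-bit count of seconds since 1970-01-01 00:00:00 UTC run out of numbers in January 2038. A minimal Python sketch, assuming a signed 32-bit counter that wraps around in two's complement (the helper names are illustrative only):

```python
from datetime import datetime, timedelta, timezone

# Unix time counts seconds since 1970-01-01 00:00:00 UTC (leap seconds are ignored).
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1  # largest value a signed 32-bit time_t can hold


def to_utc(seconds: int) -> datetime:
    """Convert a Unix timestamp to an aware UTC datetime."""
    return EPOCH + timedelta(seconds=seconds)


def wrap_int32(value: int) -> int:
    """Simulate two's-complement overflow of a signed 32-bit integer."""
    return (value + 2**31) % 2**32 - 2**31


if __name__ == "__main__":
    print(to_utc(INT32_MAX).isoformat())                  # 2038-01-19T03:14:07+00:00
    print(to_utc(wrap_int32(INT32_MAX + 1)).isoformat())  # 1901-12-13T20:45:52+00:00
```

One second after the last representable moment, such a counter wraps to a large negative number, which reads as December 1901.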

Job ads

Finding a suitable IT job can be hard, especially when faced with these ads:

Taken from a long-defunct computing magazine:

IT departments are rarely liked, but I have never been called "detrimental"

UPS versus vacuum cleaner

A salutary warning to all SysAdmins who helpfully install UPSes with the sort of mains sockets you find on the wall (or in the floor box). Staff / cleaners / family members rarely bother to check. They see a socket and plug in... the vacuum cleaner or a 2 kW heater. If you are lucky, the UPS will trip out. If you are unlucky, it is time for a new UPS!

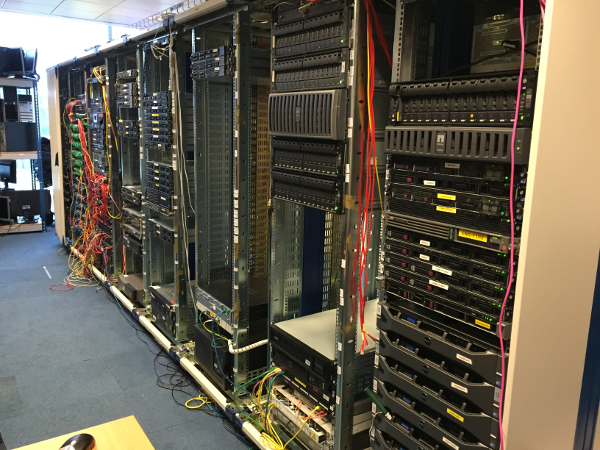

19-inch racking Jenga

Never let anyone else install 19-inch racking equipment. A new role in a company with a large number of software developers saw the IT department having to deal with their equipment room. What is the mystery pile of racking rails in that box for? Oh, they are for the servers that are in the racks. One or two of them have been installed with their rails... the rest are just sitting on top. Want to service one of the servers? Be prepared to play server Jenga. Not ideal for fingers or players with weak arms!

Needless to say, we took all of their servers off their hands and moved them into our server room, where they were correctly installed with rails, cable management, KVM, and something called an Un-interruptible Power Supply...

Burned-out powertrack

It is a fair bet that most office staff have never seen under the floor and have no idea where the magic electricity comes from. You can just plug things into the floor boxes, right? All fine and dandy until your floor plans go out of the window and people start plugging in racks and tables of equipment with no check on the load.

Overheating elec-track/powertrack under the floor has an interesting smell. It is even more fun when the 63 Amp breaker refuses to engage and large arcs flash over the now burned-out track interface. This "fun" has happened in two different offices where I have worked. It is electrical, so it is IT's problem, right? The look on the Facilities Manager's face is always a picture!

Network loops

Beware the humble desktop switch and idle developers: a broadcast storm in the days before loop protection is always a fun thing to track down. Cue standing in the switch room for the developers' floor, running Wireshark and pulling connections until the storm ceased, then tracking it to the desk where someone had decided it would look pretty to plug a Cat5 cable in a loop between two sockets. Of course, there were no witnesses at the empty desk!

Thankfully, on another site with loop-protect enabled on all of the HPE switches, testers and software developers were stopped dead in their tracks whenever they tried to cross the network streams!

Rogue DHCP servers

Now it is the turn of Customer Support to mess with things. They would turn up on site, start up their laptops, then start the virtual machines they run when out on a customer's site, forgetting about the DHCP server they had running. Having been starved of cash whilst under the finance department, IT was stuck using early-2000s-era 10/100 Mb/s switches for all of the desktop connections. With no dhcp-snooping ability, the Customer Service rep, now locked away in a meeting, would not see the chaos their laptop was creating.

Fast-forward to a time when the new second-hand HPE switches are installed and running dhcp-snooping, and it is now Customer Service reps complaining that their laptop is being booted off the network. Shame!

NTP leap second

Every now and then, when the wind has been blowing in the wrong direction, the Time Lords have to insert a leap second into UTC to allow the planet to catch up. Just pause and get your head around that: we are stopping time for 1 second to wait for the planet to rotate so that zero degrees longitude aligns again. Now work out how fast we are spinning!

The leap second should be taken care of by leap-second-aware software, such as implementations of the Network Time Protocol (NTP). Leap seconds are usually announced about six months before they are applied, to give people time to sort out their kit.

With a leap second announced, I followed the SysAdmin guide and emailed the company to advise them of the coming time shift. Knowing certain departments' predilection for running ancient kit that is out of support, I was doing my job as security and stability adviser. One of the staff members who knew better, but did not know me, yelled out "who is this *expletive* (insert name here)?" Over here, matey! He proceeded to school me in the way NTP works, how leap seconds are taken care of, and why my email was a waste of time...

A couple of months after the leap second, we in IT learned that one of the company's customers had experienced a serious outage with the kit the company sold. The NTP software certainly takes care of leap seconds, if your developers bother to include that piece of code in the NTP module on the devices you sell. Thousands of devices lost time sync for several hours, causing all manner of issues for mobile phone users trying to make calls. Time-division multiple access tends to fail if the time sync is wrong!
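Part of why leap seconds need deliberate handling is that everyday time libraries do not expect them. During a positive leap second, UTC reads 23:59:60. A minimal Python sketch using only the standard library (the 31 December 2016 leap second is just a convenient example, not necessarily the one in this tale, and the helper names are illustrative) shows that `datetime` refuses that timestamp outright, while POSIX-style seconds give it the same number as the following midnight:

```python
import calendar
import time
from datetime import datetime

FMT = "%Y-%m-%d %H:%M:%S"
LEAP = "2016-12-31 23:59:60"  # example positive leap second (UTC)
NEXT = "2017-01-01 00:00:00"  # the second after it


def datetime_accepts(stamp: str) -> bool:
    """True if datetime can represent the stamp; it only allows seconds 0..59."""
    try:
        datetime.strptime(stamp, FMT)
        return True
    except ValueError:
        return False


def posix_seconds(stamp: str) -> int:
    """UTC wall-clock string to POSIX seconds; time.strptime allows tm_sec up to 61."""
    return calendar.timegm(time.strptime(stamp, FMT))


if __name__ == "__main__":
    print(datetime_accepts(LEAP))                    # False
    print(time.strptime(LEAP, FMT).tm_sec)           # 60
    print(posix_seconds(LEAP), posix_seconds(NEXT))  # 1483228800 1483228800
```

Two distinct seconds collapse onto one timestamp, so code that assumes every second has its own number (or that 23:59:60 cannot exist) needs explicit leap-second handling.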

The 10 GbE network is slow!

There is a very good reason to write accurate documentation, especially for testers who blindly follow the script. So when step 10 of your test requires them to set the MTU to 1200, it is a good idea to include step 11: reset the MTU to 1500 - and tick the box to confirm you have completed the step. Many, many RT tickets were created with the complaint of a "slow network" and "please do the needful". That would be the same network with 10 Gigabit Ethernet running over fibre-optic!

Mystery kickstart file

Managed Services are working on a new project: rack-mount Dell servers are set to replace huge blade server systems, with everything that ran on the blades now running as a virtual-machine. So the Dell servers need an installation of CentOS 7 to help Managed Services develop a way of deploying the solution to customers. I duly point the people working on it at our in-house PXE boot service, and they install away, as this is only for development ... right...

Weeks later, with one of the MS team retired, the technical writer approaches and asks how many of our customers are likely to have their own PXE boot servers. "Huh?" was my response. The MS team had documented their build, right down to using the PXE server to install everything. Bugger! To help the tech-writer, I create and document ISO images that will perform the same action as the PXE server, and all is well...

More time passes and another work colleague asks "Does this kickstart file look familiar?" Why yes, it's mine from our in-house PXE server. Where did you get it from? "The build system." Who put it there? "No-one knows!" I had made a mistake in my kickstart file: being lazy, I had copied it from the desktop build and left Europe/London as the timezone. I spotted the error and eventually changed it to Etc/UTC, as I always set my servers to UTC. Someone in the company's Indian office had blindly copied the kickstart set-up into the build system, then deployed several servers at an Indian customer. They had noticed the time was off! No-one ever knew, or admitted, how the kickstart file made it into the build system. Documentation was duly updated to remind people to update the timezone!
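To put rough numbers on "the time was off", here is a Python sketch comparing the wall clock of a server left on Europe/London with local time in India at the same instant. It uses fixed UTC offsets rather than the tz database, assuming GMT (+00:00) in the UK winter, BST (+01:00) in the UK summer, and IST (+05:30) all year; the dates and the helper name are hypothetical:

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets, so no tz database is needed (assumed values, see lead-in).
UTC = timezone.utc
GMT = timezone(timedelta(0), "GMT")                    # Europe/London, winter
BST = timezone(timedelta(hours=1), "BST")              # Europe/London, summer
IST = timezone(timedelta(hours=5, minutes=30), "IST")  # Asia/Kolkata, no DST


def wall_clock_gap(server_tz: timezone, site_tz: timezone, instant: datetime) -> timedelta:
    """Difference between the site's local wall clock and the server's, at one instant."""
    server_wall = instant.astimezone(server_tz).replace(tzinfo=None)
    site_wall = instant.astimezone(site_tz).replace(tzinfo=None)
    return site_wall - server_wall


if __name__ == "__main__":
    winter = datetime(2021, 1, 4, 9, 0, tzinfo=UTC)  # hypothetical instants
    summer = datetime(2021, 7, 1, 9, 0, tzinfo=UTC)
    print(wall_clock_gap(GMT, IST, winter))  # 5:30:00
    print(wall_clock_gap(BST, IST, summer))  # 4:30:00
```

The underlying instant is identical in every case; only the displayed local time differs, and the gap itself moves by an hour whenever the UK changes its clocks. A server on Etc/UTC never shifts.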

Horror show

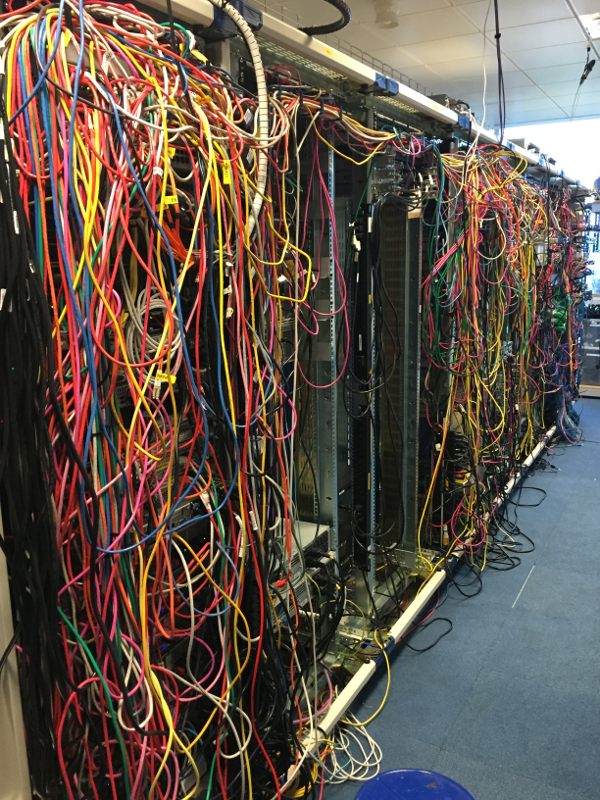

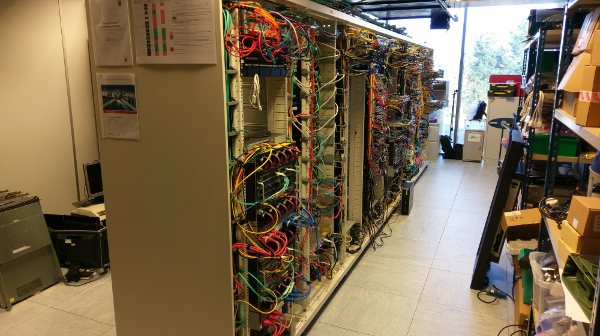

A now-defunct server room inherited from others. Carpet (bad for static) and obsolete Cooper B-Line racks with no cable management, plus half-cut cable ties that liked to slice your arms open.

Following two long weekends and a lot of effort, we replaced the racks with ones made by Dataracks, making good use of their cable management solutions.

Labelling everything makes fault-finding so much easier!

April Fool

A little jape that was also a test of intelligence, emailed around the office on April 1st. You can guess which departments were staffed with snowflakes...

"In order to save money, from today, we will be exchanging all A4 paper in the printers for A5; and all A3 for A4. Due to the reduction in resolution, we will be supplying contrast enhancing equipment to everyone. To assist with this contrast enhancement, we will be changing the white paper for black, and changing the black toner for white. Likewise, cyan will be exchanged for green, yellow will be exchanged for orange, and magenta is too expensive, so we will no longer be using her services. Please assist us in this change by gamma-correcting all of your images to remove magenta. Thank you in advance for your assistance."

Page updated: 29th August 2021